Build vs buy software in 2026: a decision framework for CTOs

An opinionated build vs buy decision framework for CTOs at 50-300 person B2B companies. Score strategic importance, differentiation, five-year total cost, and team capacity, with a worked example for a 120-person operations system.

- Published 3 May 2026

Key takeaways

- Global enterprise software spending is forecast to reach $1.25 trillion in 2025, growing 14% year over year (Gartner, 2025).

- The average mid-market organisation now runs 112 SaaS applications, up from 80 in 2020, and roughly half of that spend goes underused or unused (Productiv State of SaaS, 2024).

- The DORA 2024 report found that elite delivery teams ship change with 127x lower failure rates, but team capacity, not tooling, is the strongest predictor of where a build can succeed (Google/DORA, 2024).

- For ~9 in 10 decisions inside a 50-300 person B2B company, the honest answer is buy or buy-and-extend. Custom builds win when the capability is strategically central, no mature SaaS covers the core, and the team can operate the system for five years.

- "Total cost" is the wrong frame if it stops at licences or salaries. Integration, change management, and the cost of leaving a vendor often dominate.

TL;DR

Build vs buy is not a values question. It is a four-axis scoring exercise — strategic importance, differentiation, five-year total cost, and team capacity — applied to a specific capability inside a specific company at a specific moment. Score each axis from 1 to 5, multiply by the weights below, and the answer is usually obvious. When the score lands in the middle, the right move is rarely "build everything"; it is buy a strong base and extend the seams that matter. The framework below is the one we use on engagements at companies between 50 and 300 people, with a worked example for a typical mid-market operations system.

Why "build vs buy" is harder in 2026 than it was in 2018

Two things changed at once. First, the SaaS market got dense. A 2024 BetterCloud benchmark put average application count at 131 apps for mid-market companies and 211 for enterprise, with most of the growth coming from departmental purchases that never crossed an architecture review (BetterCloud State of SaaSOps, 2024). When you can buy a credible tool for almost any business problem, the bar to justify a build moves up.

Second, AI-assisted engineering compressed the cost of writing code without compressing the cost of running it. Stack Overflow's 2025 developer survey reported 84% of developers now use or plan to use AI coding tools, but trust in AI accuracy has dropped to 29%, down 11 points year over year (Stack Overflow, 2025). The implication for build-vs-buy: code creation is cheaper, but code operation, security review, and long-tail maintenance are not. The five-year cost curve has not flattened.

Together, these shifts narrow the legitimate domain for custom software. The classical answer — "build what is differentiating, buy the rest" — is still right. The work is being honest about which is which.

The four-axis framework

Score each axis from 1 (low) to 5 (high). Multiply each score by its weight and add the results. The raw maximum is 500, so divide by 5 to get a total out of 100.

| Axis | What it measures | Weight |
|---|---|---|
| Strategic importance | How directly does this capability touch revenue, margin, or differentiation? | 30 |
| Differentiation potential | Could doing this materially better than competitors create a durable advantage? | 25 |
| Five-year total cost ratio | Build cost divided by buy cost, normalised to a 5-year horizon | 25 |
| Team capacity | Can the team realistically operate this for five years without becoming the bottleneck? | 20 |

Total ≥ 70 → build is honestly on the table. 50-69 → buy-and-extend. < 50 → buy. The cutoffs are not magic; they are the thresholds where the conversation changes from "should we" to "how", or stops entirely.
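The scoring rule above is simple enough to sketch in a few lines. This is a minimal illustration, not a tool we ship: the names `WEIGHTS`, `weighted_total`, and `band` are hypothetical, and dividing the raw sum by 5 to land on a 100-point scale is our reading of how the weights and the cutoffs fit together.

```python
# Weights from the table above; they sum to 100.
WEIGHTS = {
    "strategic_importance": 30,
    "differentiation": 25,
    "five_year_cost": 25,
    "team_capacity": 20,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Return the weighted score on a 0-100 scale.

    Each axis is scored 1-5, so the raw weighted sum tops out at 500.
    Dividing by 5 (an assumption of this sketch) maps it onto 100.
    """
    if set(scores) != set(WEIGHTS):
        raise ValueError(f"expected exactly these axes: {sorted(WEIGHTS)}")
    for axis, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{axis} must be between 1 and 5, got {score}")
    raw = sum(WEIGHTS[axis] * scores[axis] for axis in WEIGHTS)
    return raw / 5


def band(total: float) -> str:
    """Map a 0-100 total onto the three bands described above."""
    if total >= 70:
        return "build"
    if total >= 50:
        return "buy-and-extend"
    return "buy"
```

Fed the scores from the worked example later in this article (4, 3, 2, 3), `weighted_total` returns 61.0 and `band` returns "buy-and-extend".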

Axis 1: Strategic importance

Ask one question: if this capability disappeared tomorrow, what fraction of the business would stop? A pricing engine for a B2B marketplace scores 5. A vendor-management portal scores 2. Internal tooling for a finance team scores 1, even when it feels critical day to day.

Common mistake: scoring high because a system is used heavily, not because it is strategically central. Heavy use makes a system valuable to operate well. It does not make it strategic. A 4 or 5 should require that the capability is part of how the company makes money or how it is observably better than competitors.

Axis 2: Differentiation potential

This is the axis CTOs tend to overweight. Differentiation potential asks whether the company could do this materially better than the SaaS option, in a way customers would notice or pay for.

A useful filter: if the same outcome could be reached by configuring an existing tool aggressively and paying for the seats, the differentiation potential is at most 3. Real 4s and 5s involve workflow, data, or model choices that no off-the-shelf tool will let you make.

Axis 3: Five-year total cost ratio

The number teams most often get wrong in build-vs-buy is total cost. The build column undercounts; the buy column ignores switching cost. A defensible five-year model includes:

Build column:

- Engineering build cost (often 1.6-2.5x first-year run rate due to discovery and rework)

- Operations cost (on-call, SLAs, infrastructure, security review)

- Maintenance cost (typically 15-25% of build cost per year, sometimes higher in regulated domains)

- Integration cost (usually underestimated by 40-60%)

- Knowledge risk cost (probability-weighted cost of losing a key engineer)

Buy column:

- Licence and seat cost over 5 years (account for stepped pricing as the company grows)

- Implementation and configuration cost

- Integration and middleware cost

- Vendor management and procurement load

- Change cost when the vendor pivots, gets acquired, or sunsets

- Exit cost — what it would take to leave in year 4 or 5

A 2024 Flexera report found between 32% and 38% of cloud and SaaS spend was wasted across mid-market organisations, much of it from over-provisioned licences and shelfware (Flexera 2024 State of the Cloud, 2024). That waste belongs in the buy column at honest companies.

The output of this axis is a ratio: build cost / buy cost. The lower the ratio, the higher the axis score, because a low ratio favours build. A ratio under 1.5 makes build interesting. Above 3.0, build is a vanity exercise unless the other axes are screaming.
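As a rough sketch of the ratio check, assuming both columns have already been totalled over five years. The function names are hypothetical, and the label for the middle zone ("expensive build") is borrowed from the worked example further down rather than defined by the thresholds themselves.

```python
def cost_ratio(build_cost: float, buy_cost: float) -> float:
    """Five-year build cost divided by five-year buy cost."""
    if buy_cost <= 0:
        raise ValueError("buy_cost must be positive")
    return build_cost / buy_cost


def ratio_zone(ratio: float) -> str:
    """Classify a ratio using the 1.5 and 3.0 thresholds from the text."""
    if ratio < 1.5:
        return "build is interesting"
    if ratio > 3.0:
        return "build is a vanity exercise"
    # Middle zone: label taken from the worked example, an assumption here.
    return "expensive build"


# Totals from the worked example in this article: $1.6M build, $620k buy.
ratio = cost_ratio(1_600_000, 620_000)
print(f"{ratio:.1f}", ratio_zone(ratio))
```

The sketch deliberately stops at the zone. How a ratio translates into a 1-5 axis score is still a judgement call for the people doing the scoring.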

Axis 4: Team capacity

The axis nobody wants to score honestly. Team capacity asks: can your engineering organisation build this without losing momentum on what already pays the bills, and operate it for five years?

Three sub-questions force an honest score:

- Do you have engineers who have shipped something at this scope before?

- Can the build go ahead without pulling 20% or more of your senior engineering capacity off product work for six months or longer?

- If the engineer who designed it leaves, can the rest of the team operate and extend it?

Three yeses is a 5. Two is a 4. One is a 2. Zero is a 1, and the build conversation should end there regardless of the other axes.
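The yes-count mapping can be written down directly. A minimal sketch with hypothetical parameter names, one per sub-question. Note that the mapping as stated never produces a 3; treating 3 as a manual score for partial answers is an assumption of this sketch, not something the rule defines.

```python
# Yes-count to score, exactly as stated above. There is no entry that
# yields 3; a 3 would have to be assigned by hand for partial answers.
SCORE_BY_YES_COUNT = {3: 5, 2: 4, 1: 2, 0: 1}


def team_capacity_score(
    shipped_at_this_scope: bool,
    keeps_senior_capacity_on_product: bool,
    survives_designer_leaving: bool,
) -> int:
    """Score team capacity from the three yes/no sub-questions."""
    yes_count = sum(
        [
            shipped_at_this_scope,
            keeps_senior_capacity_on_product,
            survives_designer_leaving,
        ]
    )
    return SCORE_BY_YES_COUNT[yes_count]


def build_conversation_continues(capacity_score: int) -> bool:
    """A score of 1 ends the build conversation regardless of other axes."""
    return capacity_score > 1
```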

The DORA 2024 research found that AI adoption correlated with a 1.5% decrease in delivery throughput and a 7.2% decrease in delivery stability, suggesting that velocity gains from tooling are not free (Google/DORA, 2024). Capacity is real; it does not get cheaper because the IDE got smarter.

A worked example: a 120-person B2B picks an operations system

A 120-person B2B logistics company is choosing how to run order orchestration: ingest orders from 14 customer integrations, route to fulfilment partners, surface exceptions, and report SLAs. The team has three options: buy a TMS-grade SaaS, build a custom orchestrator, or buy a base platform and extend.

Strategic importance: 4 (× 30 = 120)

Order orchestration is directly customer-facing and SLA-bound. A failure here costs revenue, not just embarrassment. It is not the unique edge of the business — that lives in routing intelligence — but the orchestrator carries the value. Score 4, not 5: the company would still exist if a vendor ran this, but the customer experience and margin both depend on it.

Differentiation potential: 3 (× 25 = 75)

A reasonable SaaS TMS gets to ~80% of the workflow. The remaining 20% — bespoke exception logic, customer-specific SLAs, the routing scoring model — is where the company believes it can outperform peers. That 20% is the differentiating layer; the orchestrator under it is plumbing. Score 3.

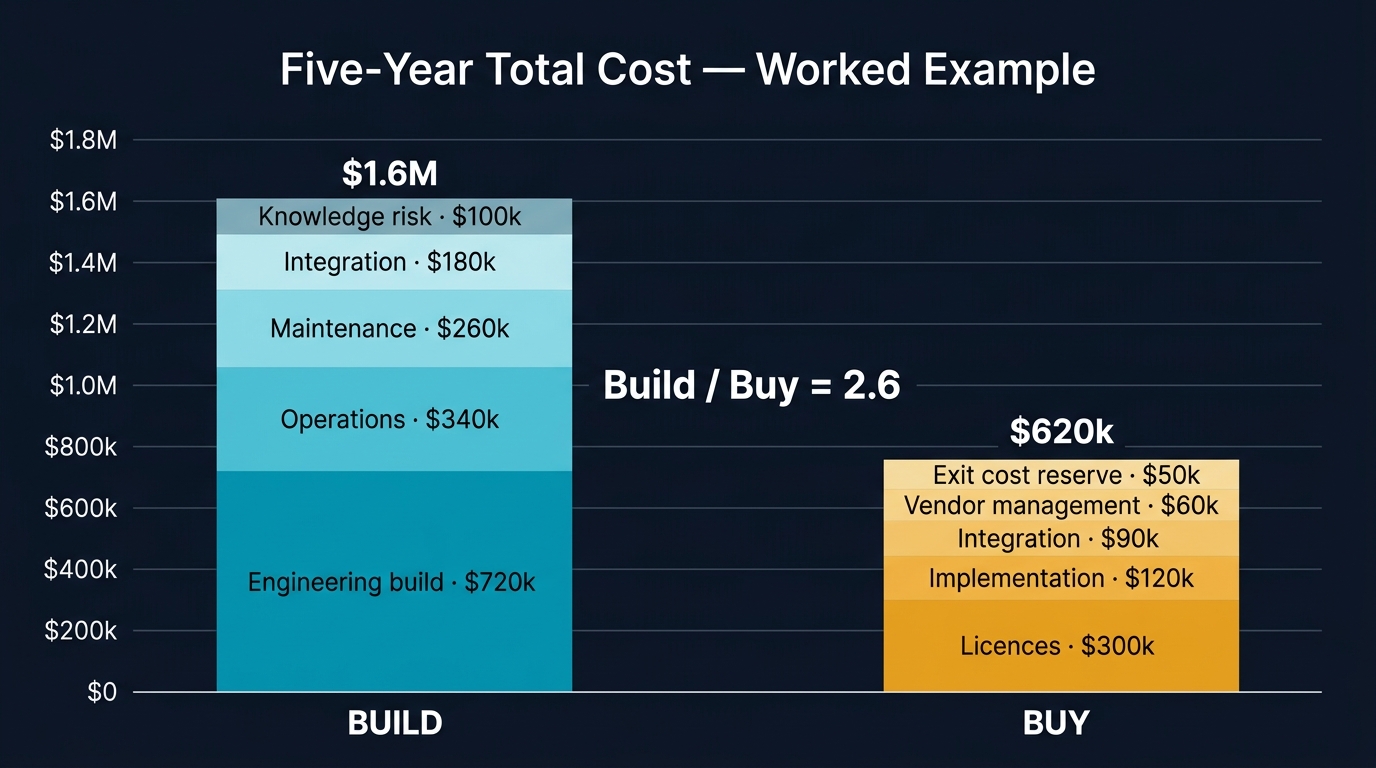

Five-year total cost ratio: 2 (× 25 = 50)

Build estimate: $1.6M total cost of ownership over 5 years (3 engineers' time for build, 2 engineers' partial time for run, infrastructure, integration). Buy estimate: $620k over 5 years (licences scaling with order volume, implementation, integration). Build / buy = 2.6.

That ratio is in the "expensive build" zone. Score 2 — meaning the cost axis is actively pushing the decision toward buy.

Team capacity: 3 (× 20 = 60)

The team has shipped a smaller orchestration system before, but doing this at higher reliability would consume two of three senior engineers for six months. The product roadmap would slip. Score 3.

Total: 305 / 500 (61 out of 100)

That number lands squarely in the buy-and-extend band. The decision in this engagement was to buy a platform that handled ingest, routing, and reporting, and extend the exception-handling and customer-specific SLA logic in-house. The differentiating 20% is custom code; the boring 80% is a vendor's problem to operate.

This is the answer most build-vs-buy exercises arrive at when they are run honestly, and it is the answer the loudest voices in the room often dislike — because it requires picking a vendor, accepting their constraints, and writing the integration code that makes the seams sing.

When build is honestly the right call

Three conditions, all required:

- The capability is strategically central (axis 1 ≥ 4) and the company believes it can do it materially better (axis 2 ≥ 4).

- No mature SaaS covers the core. "Mature" means at least three reference customers at your size and operating profile, an API surface that handles your edge cases, and a public 12-month roadmap that does not contradict your direction.

- The team can operate it (axis 4 ≥ 4). Not "could in theory hire to operate it." Today's team, plus realistic hiring.
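Because all three conditions are required, they behave as a gate rather than a score. The sketch below encodes that gate; `VendorOption`, `is_mature`, and `build_is_right_call` are hypothetical names, and the boolean fields stand in for judgements a human still has to make.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorOption:
    reference_customers_like_us: int  # at your size and operating profile
    api_handles_edge_cases: bool
    roadmap_public_and_compatible: bool  # public 12-month roadmap, no conflict


def is_mature(vendor: VendorOption) -> bool:
    """Apply the three-part maturity test from the second condition."""
    return (
        vendor.reference_customers_like_us >= 3
        and vendor.api_handles_edge_cases
        and vendor.roadmap_public_and_compatible
    )


def build_is_right_call(
    strategic_importance: int,
    differentiation: int,
    team_capacity: int,
    vendors: list[VendorOption],
) -> bool:
    """True only when every one of the three conditions holds."""
    no_mature_saas = not any(is_mature(v) for v in vendors)
    return (
        strategic_importance >= 4
        and differentiation >= 4
        and no_mature_saas
        and team_capacity >= 4
    )
```

A single mature vendor is enough to close the gate, however high the axis scores are.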

Examples where this lands cleanly:

- A pricing engine at a B2B marketplace where pricing is the product.

- A clinical decision support layer in a healthtech where the medical model is proprietary and regulated.

- A risk model in a fintech where the differentiator is the data and the math.

Examples where it usually does not, despite enthusiasm:

- A "better Salesforce" for the sales team. Almost never.

- An internal time-tracking system. Buy.

- A document management system for the legal team. Buy.

- A bespoke CMS for marketing. Buy a headless CMS, extend the editor experience.

When buy is the right call — and how to buy well

For most decisions, buy wins. The harder skill is buying well. Three rules from real engagements:

Buy on the workflow, not the feature list

Vendor demos are choreographed against feature checklists. Switch frames in the evaluation: ask the vendor to walk through your end-to-end workflow, including the failure cases. Watch where the demo gets quiet. That is where the integration debt will live.

Negotiate exit before signing entry

What does it cost to leave? Three concrete questions:

- Can we export our data in a structured, complete format on demand, and is that contractually guaranteed?

- What APIs let us run our own reporting outside the vendor surface?

- What is the migration burden in year 4 if the vendor pivots away from us?

Vendors that answer these questions cleanly are vendors worth buying. Vendors that get evasive are buying you, not the other way around.

Treat configuration debt like code debt

Heavy SaaS customisation creates a parallel codebase you do not own. Decide upfront which configurations are reversible (workflows, fields, automations) and which are not (data model decisions, integration shape, identity model). Document the irreversible ones. Review them on the same cadence as your code.

The third option: buy and extend

The framework's middle band — 50-70 points — is where most decisions actually live. Buying a base platform and extending the seams that matter is the answer there, and it has a deserved reputation for going wrong. Three patterns make it work:

- Pick the platform on its API surface, not its UI. The UI exists for the SaaS company's roadmap. The API exists for yours.

- Build extensions as a separate service, not as plugins inside the vendor's runtime. When the vendor changes, your extensions do not need to change.

- Own the data integration. Replicate the data you care about into your own warehouse. Read from there for analytics, reporting, and ML. Never let the vendor's reporting layer become the source of truth for executive decisions.

The buy-and-extend pattern is also where the cost model most often surprises teams. The licence is the smallest line. The integration code, the data replication, and the ongoing reconciliation cost dominate. Budget accordingly.

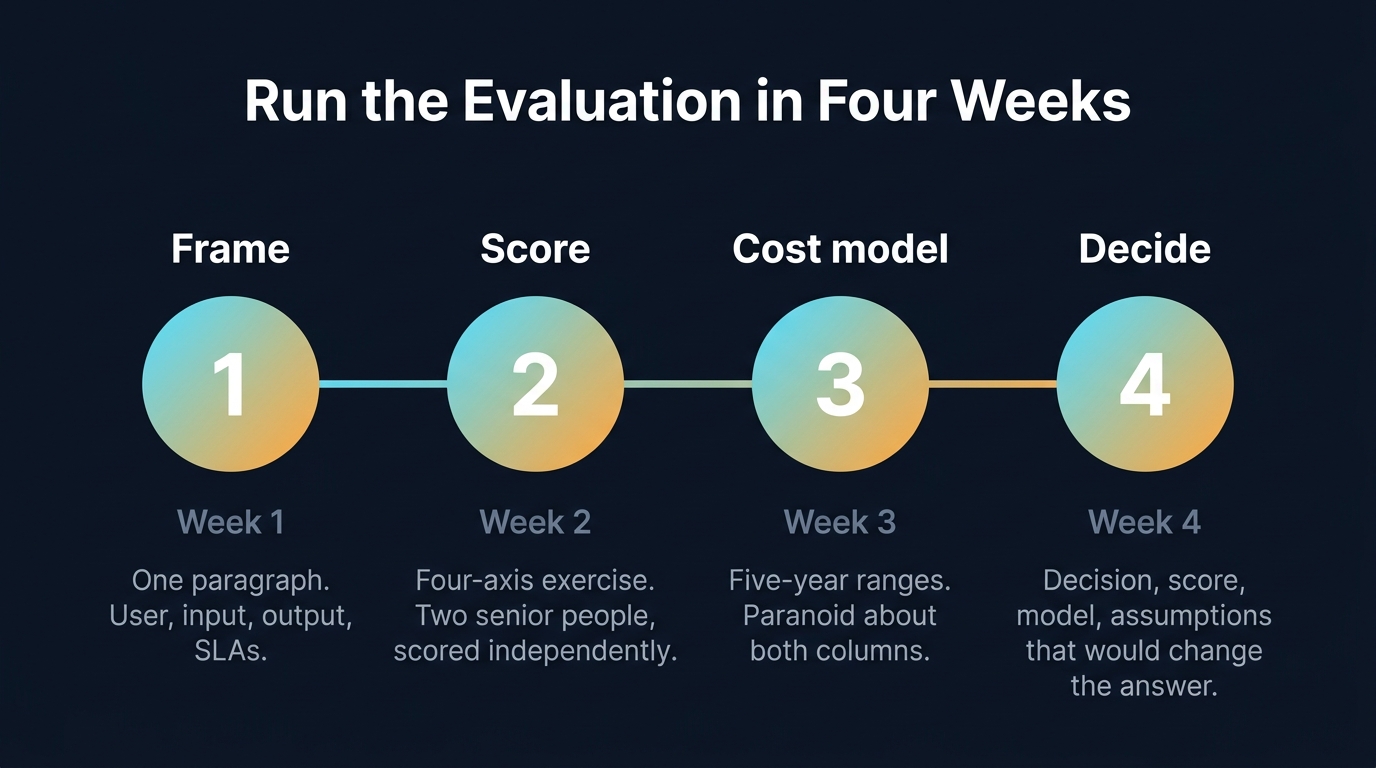

How to actually run this evaluation in 4 weeks

Most build-vs-buy decisions stall because the evaluation is open-ended. A four-week structure forces the work to land:

Week 1 — Frame. Write the capability description in one paragraph. Define the user, the input, the output, the SLAs. Stop scoping. Start scoring.

Week 2 — Score. Run the four-axis exercise with two or three senior people independently. Compare scores. Disagreements are the signal — they reveal where you do not yet know enough to decide.

Week 3 — Cost model. Build the five-year cost model for both columns. Be paranoid about the buy column. Be even more paranoid about the build column. Use ranges, not point estimates.

Week 4 — Decide and write it down. Document the decision, the score, the cost model, and the assumptions that would change the answer. The last point matters: a decision is only honest if you can describe what would force you to revisit it.
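The Week 2 comparison can be made mechanical. A small sketch, assuming every scorer rates the same set of axes. The function name, the scorer labels, the sample scores, and the default spread of 2 points are illustrative assumptions, not part of the framework.

```python
def disagreements(
    scores_by_person: dict[str, dict[str, int]],
    min_spread: int = 2,  # assumed threshold for "worth discussing"
) -> dict[str, int]:
    """Return axes where independent scores differ by min_spread or more."""
    # Assumes every scorer used the same axis names as the first one.
    axes = next(iter(scores_by_person.values())).keys()
    flagged = {}
    for axis in axes:
        values = [scores[axis] for scores in scores_by_person.values()]
        spread = max(values) - min(values)
        if spread >= min_spread:
            flagged[axis] = spread
    return flagged


# Hypothetical scores from three senior people, for illustration only.
independent_scores = {
    "cto": {"strategic_importance": 4, "differentiation": 4, "team_capacity": 3},
    "vp_eng": {"strategic_importance": 4, "differentiation": 2, "team_capacity": 3},
    "head_of_ops": {"strategic_importance": 5, "differentiation": 3, "team_capacity": 2},
}
print(disagreements(independent_scores))
```

Flagged axes are the ones to talk through before building the Week 3 cost model; averaging them away hides the signal.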

A four-week ceiling forces the decision to be made on the same data the team has now, not on data they wish they had. Companies that take longer than four weeks rarely come back with better information; they come back with a different cast of opinions.

What we got wrong on this framework over the last 18 months

A few honest revisions, because frameworks that never change are usually not being tested:

We used to weight differentiation higher. It pulled too many decisions toward build that, in practice, were better served by buy-and-extend. The 25% weight is now more honest about how rarely true differentiation is durable.

We used to score team capacity last. We now score it earlier, because once a build is partially scoped it is psychologically harder to kill on capacity grounds. Killing a build because the cost is high is straightforward. Killing it because the team genuinely cannot operate it is harder, and people delay it.

We underweighted exit cost. A decade of vendor consolidation has changed the calculus. Datadog acquiring observability companies, Atlassian sunsetting Server, Salesforce pricing changes: exit cost is no longer a tail risk. It is a base case.

Frequently asked questions

How is this different from "build what differentiates, buy the rest"?

It is the same principle, made specific enough to score. The classical phrasing leaves "differentiates" undefined, which is where most decisions go wrong. The four-axis version forces a number on what would otherwise be a hand-wave.

Where does AI-generated code change the math?

It compresses build cost on the input side and increases operational and review cost on the output side. The net effect on five-year TCO is small for most non-trivial systems. AI helps build velocity. It does not change the operating cost curve, which is where most build-vs-buy decisions are decided over five years.

Should we use this for every decision?

No. Run it for capabilities where the build column would cost more than ~$300k over five years, or where the system would be operated by a team of three or more. Below that, the question is usually "buy a tool and move on."

What if the team really wants to build it?

Score it honestly anyway. If it lands in the buy band, the question to ask the team is what part of the work is genuinely interesting — and is there a buy-and-extend version where the interesting part stays in-house. Often there is.

The decision is rarely the hard part

Most build-vs-buy decisions have a clear answer once the four axes have been scored honestly. The hard part is the honesty: scoring strategic importance without ego, scoring differentiation without optimism, scoring cost without optimism either, and scoring team capacity without flattery.

When companies bring us in for this evaluation at DevLume, the most useful thing we add is not the framework itself — it is the disinterest. We do not have a stake in whether you build or buy. The team often does, and that is exactly why a structured score, written down before the cost model is built, tends to produce decisions that hold up two years later.

If you are in the middle of one of these calls and want a second pair of eyes on the score, that is the conversation to have before the cost model, not after.